OpenAI released GPT-5.5 on April 23, 2026. Within 24 hours, the model topped Terminal-Bench 2.0 at 82.7%, more than doubled GPT-5.4’s long-context retrieval score, and sparked a pricing debate that is still playing out in Reddit threads.

Here is why that matters if you run an OpenClaw AI agent: OpenAI’s GPT-5.5 uses roughly 40% fewer output tokens than GPT-5.4 to complete the same coding tasks. And if you already pay for a ChatGPT Plus subscription, you can connect GPT-5.5 to your OpenClaw agent on xCloud through OAuth without a single dollar in additional API costs.

In this guide, we will cover everything you need to know about OpenAI GPT-5.5: what it is, where it outperforms the competition, how much it costs, and how to get it running on your own OpenClaw AI agent in just a few minutes. Let us get started!

What Is OpenAI GPT-5.5?

GPT-5.5 is OpenAI’s newest flagship large language model, released on April 23, 2026. Internally codenamed “Spud,” it is the first fully retrained base model since GPT-4.5. OpenAI president Greg Brockman described it as “a faster, sharper thinker for fewer tokens” during the launch briefing.

But what makes OpenAI GPT-5.5 different from previous models? It was specifically built for agentic workloads. That means instead of just answering your questions, GPT-5.5 can:

- Plan multi-step tasks on its own

- Use tools like file systems, terminals, and APIs

- Check its own work and correct mistakes

- Navigate ambiguity without asking you to clarify everything

- Keep going until the task is actually finished

This is exactly the kind of behavior you want from an AI agent that operates through Telegram or WhatsApp using a framework like OpenClaw.

Two variants are available: GPT-5.5 (Standard) for advanced reasoning and complex agent tasks, and GPT-5.5 Pro, which applies additional parallel compute to harder problems:

GPT-5.5 (Standard): Available to ChatGPT Plus, Pro, Business, and Enterprise subscribers. This is the version most people should use.

GPT-5.5 Pro: Uses additional parallel computing for harder problems. Limited to Pro, Business, and Enterprise tiers. Best for deep research, hard math, and tasks where accuracy matters more than speed.

OpenAI GPT-5.5 Technical Specifications at a Glance

Here is a quick look at what OpenAI GPT-5.5 ships with:

| Specification | Detail |
|---|---|
| Release Date | April 23, 2026 |
| Context Window (API) | 1,050,000 tokens |
| Context Window (Codex) | 400,000 tokens |
| Max Output Tokens | 128,000 |
| Input Modalities | Text, Image, File |
| API Input Price | $5.00 per 1M tokens |
| API Output Price | $30.00 per 1M tokens |
| Batch/Flex Pricing | 50% of standard rate |
| Knowledge Cutoff | December 2025 |
| Reasoning Mode | Explicit chain-of-thought |

The 1M+ token context window is particularly noteworthy. It allows GPT-5.5 to process entire codebases, long research papers, or weeks of agent conversation history in a single session. And unlike many models that accept large inputs but struggle to retrieve information from them, OpenAI GPT-5.5 holds up well deep into its context, as the long-context benchmarks below show.

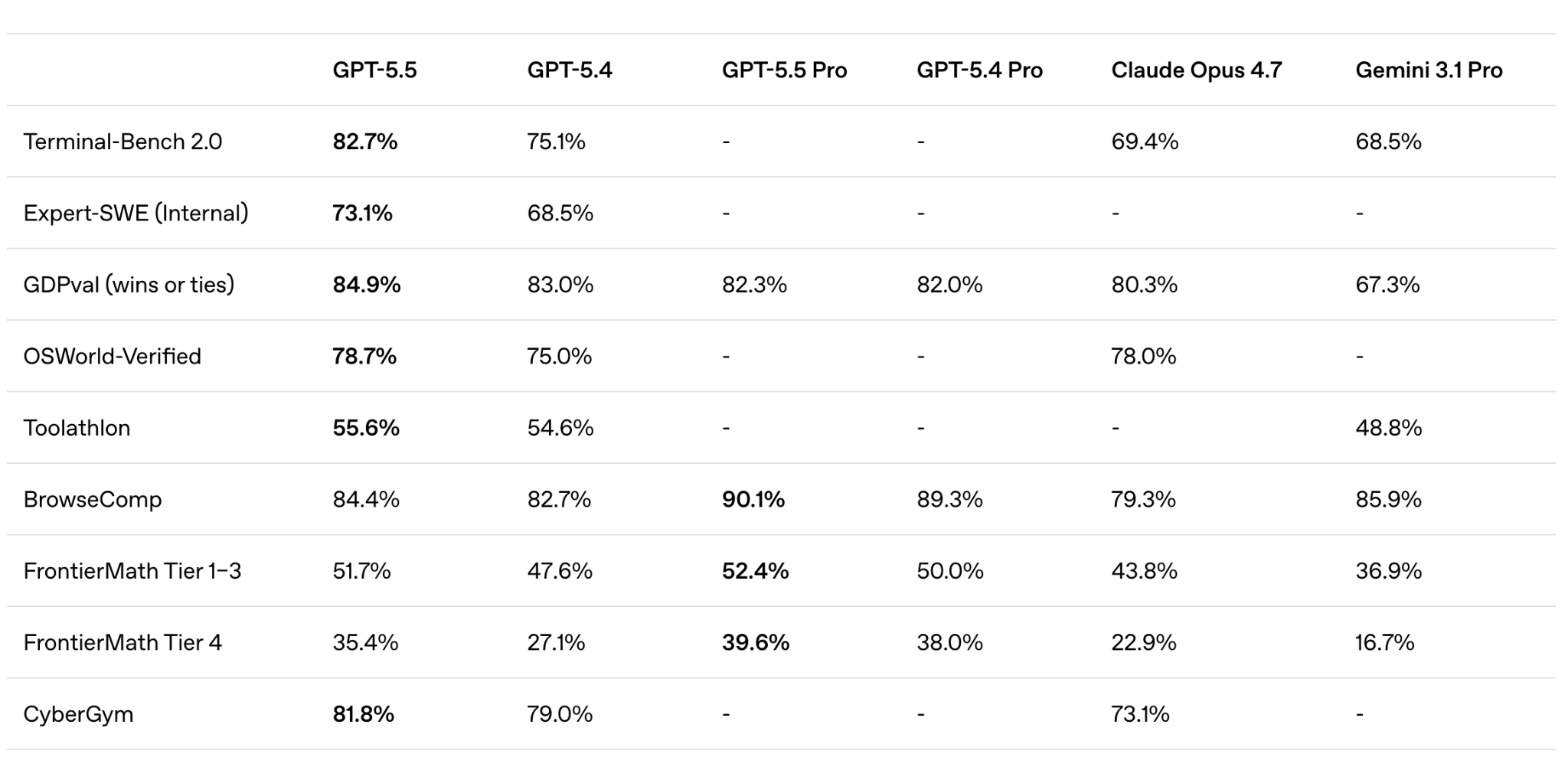

OpenAI GPT-5.5 Benchmarks: Full Breakdown

Recent benchmarks show OpenAI GPT-5.5 ahead of Claude Opus 4.7 and Gemini 3.1 Pro on many evaluations across coding, professional, scientific, and cybersecurity categories, though not on every one of them. Let us break the numbers down.

OpenAI GPT-5.5 Coding Benchmarks

This is where GPT-5.5 really pulls ahead. On Terminal-Bench 2.0, which measures how well a model handles complex CLI workflows requiring planning and tool coordination, GPT-5.5 scored 82.7%, a full 13.3 points ahead of Claude Opus 4.7’s 69.4%.

| Eval | GPT-5.5 | GPT-5.4 | GPT-5.5 Pro | Claude Opus 4.7 | Gemini 3.1 Pro |
|---|---|---|---|---|---|
| SWE-Bench Pro | 58.6% | 57.7% | – | 64.3% | 54.2% |
| Terminal-Bench 2.0 | 82.7% | 75.1% | – | 69.4% | 68.5% |
| Expert-SWE (Internal) | 73.1% | 68.5% | – | – | – |

On BenchLM’s agentic tool use category, GPT-5.5 ranks #2 out of 115 models with an average score of 98.2. The model handles tool calls more consistently, is less likely to pass malformed arguments, and avoids looping on failed invocations.

If you are using OpenClaw for task automation, these improvements translate directly into fewer failed workflows and lower token costs.

“GPT-5.5 is noticeably smarter and more persistent than GPT-5.4, with stronger coding performance and more reliable tool use. It stays on task for significantly longer without stopping early, which matters most for the complex, long-running work our users delegate to Cursor.”

— Michael Truell, Co-founder & CEO at Cursor

“The first coding model I’ve used that has serious conceptual clarity.” — Dan Shipper, Founder and CEO of Every

OpenAI GPT-5.5 Professional & Knowledge Work Benchmarks

The same strengths that make GPT-5.5 great at coding also make it powerful for everyday work. The model is better at understanding intent and can move more naturally through the full loop of knowledge work: finding information, using tools, checking output, and turning raw material into something useful.

| Eval | GPT-5.5 | GPT-5.4 | GPT-5.5 Pro | Claude Opus 4.7 | Gemini 3.1 Pro |
|---|---|---|---|---|---|
| GDPval (wins/ties) | 84.9% | 83.0% | 82.3% | 80.3% | 67.3% |
| FinanceAgent v1.1 | 60.0% | 56.0% | – | 64.4% | 59.7% |
| Investment Banking (Internal) | 88.5% | 87.3% | 88.6% | – | – |
| OfficeQA Pro | 54.1% | 53.2% | – | 43.6% | 18.1% |
| Tau2-bench Telecom | 98.0% | 92.8% | – | – | – |

More than 85% of OpenAI’s own employees now use Codex with GPT-5.5 every week across departments. Some real examples from their teams:

- Finance team: Used Codex to review 24,771 K-1 tax forms totaling 71,637 pages, accelerating the task by two weeks compared to the prior year

- Communications team: Used GPT-5.5 to analyze six months of speaking request data, build a scoring and risk framework, and automate low-risk approvals via a Slack agent

- Go-to-Market team: Automated weekly business reports, saving 5-10 hours per week

“GPT-5.5 delivers the sustained performance required for execution-heavy work. Built and served on NVIDIA GB200 NVL72 systems, the model enables our teams to ship end-to-end features from natural language prompts, cut debug time from days to hours, and turn weeks of experimentation into overnight progress in complex codebases.” — Justin Boitano, VP of Enterprise AI at NVIDIA

OpenAI GPT-5.5 Tool Use Benchmarks

| Eval | GPT-5.5 | GPT-5.4 | GPT-5.5 Pro | Claude Opus 4.7 | Gemini 3.1 Pro |
|---|---|---|---|---|---|
| BrowseComp | 84.4% | 82.7% | 90.1% | 79.3% | 85.9% |
| MCP Atlas | 75.3% | 70.6% | – | 79.1% | 78.2% |
| Toolathlon | 55.6% | 54.6% | – | – | 48.8% |

OpenAI GPT-5.5 Scientific & Academic Benchmarks

GPT-5.5 shows meaningful gains on scientific research workflows. On GeneBench, which focuses on multi-stage scientific data analysis in genetics and quantitative biology, GPT-5.5 clearly outperforms GPT-5.4. These problems require models to reason about ambiguous data, address hidden confounders, and correctly implement modern statistical methods. Tasks here often correspond to multi-day projects for scientific experts.

| Eval | GPT-5.5 | GPT-5.4 | GPT-5.5 Pro | Claude Opus 4.7 | Gemini 3.1 Pro |
|---|---|---|---|---|---|
| GeneBench | 25.0% | 19.0% | 33.2% | – | – |
| FrontierMath Tier 1-3 | 51.7% | 47.6% | 52.4% | 43.8% | 36.9% |
| FrontierMath Tier 4 | 35.4% | 27.1% | 39.6% | 22.9% | 16.7% |
| BixBench | 80.5% | 74.0% | – | – | – |
| GPQA Diamond | 93.6% | 92.8% | – | 94.2% | 94.3% |
| Humanity’s Last Exam (with tools) | 52.2% | 52.1% | 57.2% | 54.7% | 51.4% |

An internal version of GPT-5.5 with a custom harness also contributed to a new mathematical proof about off-diagonal Ramsey numbers, one of the central objects in combinatorics. The proof was later verified in Lean, a formal proof verification system.

“It’s incredibly energizing to use OpenAI’s new GPT-5.5 model in our harness, have it reason over massive biochemical datasets to predict human drug outcomes, and then see it deliver significant accuracy gains on our hardest drug discovery evals.” — Brandon White, Co-Founder & CEO at Axiom Bio

OpenAI GPT-5.5 Cybersecurity Benchmarks

GPT-5.5 shows a step up in cybersecurity capabilities compared to GPT-5.4. OpenAI has classified these capabilities as “High” under its Preparedness Framework, below the “Critical” threshold.

| Eval | GPT-5.5 | GPT-5.4 | Claude Opus 4.7 |
|---|---|---|---|
| Capture-the-Flag Tasks (Internal) | 88.1% | 83.7% | – |
| CyberGym | 81.8% | 79.0% | 73.1% |

OpenAI GPT-5.5 Long-Context Benchmarks: The Number That Changes Everything for AI Agents

This is the benchmark category that matters most for AI agents like OpenClaw. It measures whether a model can actually retrieve information placed deep in long context, not just accept large inputs.
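To make the idea of “retrieving information placed deep in long context” concrete, here is a simplified needle-in-a-haystack harness in Python. It is not the MRCR v2 implementation, which uses its own, harder task format; it only illustrates the general recipe of planting facts at varying depths in a long prompt and scoring how many the model recovers. The function names, needle wording, and filler text are hypothetical, and the actual model call is left out.

```python
import random


def build_haystack(needles: dict[str, str], filler: str, n_filler: int, seed: int = 0) -> str:
    """Scatter key/value 'needles' at random depths among repeated filler paragraphs."""
    rng = random.Random(seed)  # fixed seed so the test prompt is reproducible
    chunks = [filler] * n_filler
    for key, value in needles.items():
        # Random insertion points mean some needles end up deep in the context.
        chunks.insert(rng.randint(0, len(chunks)), f"The secret code for {key} is {value}.")
    return "\n\n".join(chunks)


def score_retrieval(needles: dict[str, str], answers: dict[str, str]) -> float:
    """Fraction of needles whose value the model reproduced exactly (0.0 to 1.0)."""
    hits = sum(1 for key, value in needles.items() if answers.get(key, "").strip() == value)
    return hits / len(needles)


needles = {f"project-{i}": f"code-{i:03d}" for i in range(8)}
prompt = build_haystack(needles, "Lorem ipsum filler paragraph.", n_filler=5_000)
# Pretend the model answered 6 of the 8 lookups correctly.
answers = dict(list(needles.items())[:6])
print(score_retrieval(needles, answers))  # 0.75
```

In a real harness you would send `prompt` plus one question per key to the model, parse its replies into `answers`, and grow `n_filler` to probe the 128K, 256K, and 1M-token buckets separately.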

| Eval | GPT-5.5 | GPT-5.4 | Claude Opus 4.7 |
|---|---|---|---|
| MRCR v2 8-needle 4K-8K | 98.1% | 97.3% | – |
| MRCR v2 8-needle 16K-32K | 96.5% | 97.2% | – |
| MRCR v2 8-needle 64K-128K | 83.1% | 86.0% | – |
| MRCR v2 8-needle 128K-256K | 87.5% | 79.3% | 59.2% |
| MRCR v2 8-needle 256K-512K | 81.5% | 57.5% | – |
| MRCR v2 8-needle 512K-1M | 74.0% | 36.6% | 32.2% |

The pattern is clear: OpenAI GPT-5.5’s long-context advantage kicks in above 128K tokens and becomes dominant at 256K+. At the 512K-1M range, it more than doubles both GPT-5.4 and Claude’s scores.

What does this mean for your OpenClaw AI agent? It can be the difference between an agent that recalls what you discussed three days ago and one that silently forgets it. This directly affects the quality of proactive agent workflows that need to reference accumulated context over time.

OpenAI GPT-5.5 Abstract Reasoning Benchmarks

| Eval | GPT-5.5 | GPT-5.4 | GPT-5.5 Pro | Claude Opus 4.7 | Gemini 3.1 Pro |
|---|---|---|---|---|---|
| ARC-AGI-1 (Verified) | 95.0% | 93.7% | – | 93.5% | 98.0% |
| ARC-AGI-2 (Verified) | 85.0% | 73.3% | – | 75.8% | 77.1% |

Where GPT-5.5 is Still Behind Claude

GPT-5.5 does not sweep every category. It is important to be honest about this:

- SWE-bench Pro: Claude Opus 4.7 leads with 64.3% vs 58.6%. This benchmark focuses on resolving real GitHub issues across complex codebases.

- MCP-Atlas: Claude scores 79.1% vs 75.3%, testing tool protocol integration.

- GPQA Diamond: Claude scores 94.2% vs 93.6%.

- FinanceAgent: Claude leads with 64.4% vs 60.0%.

- Creative writing & conversational tone: GPT-5.5 was optimized for execution, not personality. For customer-facing agents where warmth matters, Claude can feel more natural.

The honest take: performance differences between these frontier models are measured in single-digit percentage points on most benchmarks. Each model has strengths. None is a universal winner.

OpenAI GPT-5.5 Token Efficiency: Why It Matters More Than You Think

OpenAI reports that GPT-5.5 uses roughly 40% fewer output tokens than GPT-5.4 on equivalent Codex tasks. Independent testing from MindStudio puts the gap against Claude Opus 4.7 even wider: GPT-5.5 produces approximately 72% fewer output tokens on the same coding tasks.

Why should you care? Because AI agent workflows chain dozens or hundreds of steps per task. Each step generates output tokens that:

- Cost money (output tokens are priced higher than input tokens)

- Fill the context window (once full, the agent either resets or degrades in quality)

- Add latency (more tokens = slower responses)

A model that generates 3x the tokens per step hits context limits sooner, costs more per task, and runs slower. At scale, the difference between a concise model and a verbose one can be thousands of dollars per month.
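The context-budget part of that argument is simple arithmetic. The Python sketch below assumes, for simplicity, that every step’s output stays in the context window, and it ignores tool results and other input-side growth. The 400K limit is the Codex figure from the spec table; the 20K base prompt and the per-step token counts are hypothetical.

```python
def steps_before_full(context_limit: int, base_prompt: int, tokens_per_step: int) -> int:
    """Agent steps that fit before accumulated step output fills the context window."""
    if tokens_per_step <= 0:
        raise ValueError("tokens_per_step must be positive")
    return max(0, (context_limit - base_prompt) // tokens_per_step)


def output_cost(steps: int, tokens_per_step: int, price_per_million: float) -> float:
    """Output-token spend in USD for a run of `steps` steps."""
    return steps * tokens_per_step * price_per_million / 1_000_000


concise, verbose = 2_000, 6_000  # hypothetical tokens per step; verbose emits 3x as many
print(steps_before_full(400_000, 20_000, concise))  # 190
print(steps_before_full(400_000, 20_000, verbose))  # 63
print(output_cost(50, concise, 30.0), output_cost(50, verbose, 30.0))  # 3.0 9.0
```

Tripling tokens per step cuts the usable step count to about a third and triples the output bill for the same amount of work.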

For advanced OpenClaw sub-agent configurations where multiple agents coordinate on a single project, token efficiency becomes even more important. GPT-5.5’s efficiency means your OpenClaw agents can complete more steps within the same context budget before hitting any failure modes.

OpenAI GPT-5.5 Pricing: What It Actually Costs

Let us talk numbers. The API pricing for OpenAI GPT-5.5 is $5 per million input tokens and $30 per million output tokens. That is exactly double GPT-5.4’s rates of $2.50 and $15.

Before that scares you, consider the token efficiency side. According to analysis from Build Fast with AI, the real-world cost increase is around 20% once you account for fewer tokens per task. Here is how the math works:

A task that required 1,000 output tokens on GPT-5.4 cost $0.015. If GPT-5.5 completes the same task in 600 tokens (40% fewer), the cost is $0.018. That is a 20% increase, not a 100% increase.
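The same calculation as a small Python helper, using the list prices from the table below and the 50% batch/flex rate from the spec table. Like the worked example, the call at the bottom counts output tokens only; that is a simplification, since input prices also doubled and are not reduced by output efficiency, so an input-heavy task will land closer to the full 2x.

```python
# Per-1M-token list prices (USD) from the pricing table in this article.
PRICES = {
    "gpt-5.4": {"input": 2.50, "output": 15.00},
    "gpt-5.5": {"input": 5.00, "output": 30.00},
}
BATCH_DISCOUNT = 0.5  # Batch/Flex runs at 50% of the standard rate


def task_cost(model: str, input_tokens: int, output_tokens: int, batch: bool = False) -> float:
    """USD cost of one task at standard (or batch/flex) rates."""
    price = PRICES[model]
    cost = (input_tokens * price["input"] + output_tokens * price["output"]) / 1_000_000
    return cost * BATCH_DISCOUNT if batch else cost


# The worked example above counts output tokens only.
old = task_cost("gpt-5.4", input_tokens=0, output_tokens=1_000)
new = task_cost("gpt-5.5", input_tokens=0, output_tokens=600)
print(f"${old:.3f} -> ${new:.3f} ({new / old - 1:+.0%})")  # $0.015 -> $0.018 (+20%)
```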

Here is a comparison of current frontier model pricing:

| Model | Input (per 1M tokens) | Output (per 1M tokens) |
|---|---|---|
| OpenAI GPT-5.5 | $5.00 | $30.00 |
| OpenAI GPT-5.5 Pro | $30.00 | $180.00 |
| Claude Opus 4.7 | $5.00 | $25.00 |
| OpenAI GPT-5.4 | $2.50 | $15.00 |

Claude Opus 4.7 is about 17% cheaper per output token ($25 vs $30), but Anthropic’s newer tokenizer can produce 1.0 to 1.35 times as many tokens for the same input text. So the per-task economics are closer than the per-token prices suggest.
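A rough way to see this is to scale Claude’s input token count by the tokenizer multiplier before pricing it. The Python sketch below uses a hypothetical task (50K input tokens as GPT-5.5 counts them, 2K output tokens) and, for simplicity, holds output tokens equal on both models, even though the MindStudio test cited earlier found GPT-5.5 emitting far fewer output tokens than Opus 4.7. It also applies the multiplier to input only, as described above.

```python
def per_task_cost(input_tokens: int, output_tokens: int, input_price: float,
                  output_price: float, input_multiplier: float = 1.0) -> float:
    """USD per task; input_multiplier models a tokenizer that emits more tokens for the same text."""
    billed_input = input_tokens * input_multiplier
    return (billed_input * input_price + output_tokens * output_price) / 1_000_000


# Hypothetical task: 50K input tokens (as GPT-5.5 counts them), 2K output tokens on both models.
gpt = per_task_cost(50_000, 2_000, 5.00, 30.00)
claude_low = per_task_cost(50_000, 2_000, 5.00, 25.00, input_multiplier=1.0)
claude_high = per_task_cost(50_000, 2_000, 5.00, 25.00, input_multiplier=1.35)
print(f"GPT-5.5 ${gpt:.4f} | Opus 4.7 ${claude_low:.4f} to ${claude_high:.4f}")
```

For this input-heavy task, GPT-5.5 comes to about $0.31 while Opus 4.7 ranges from about $0.30 to $0.39, so the cheaper output price alone does not decide which model is cheaper per task.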

OpenAI GPT-5.5 Availability by Plan

Here is a quick breakdown of where OpenAI GPT-5.5 is available:

| Plan | ChatGPT (GPT-5.5 Thinking) | ChatGPT (GPT-5.5 Pro) | Codex |
|---|---|---|---|
| Free | ❌ | ❌ | ❌ |
| Plus ($20/mo) | ✅ | ❌ | ✅ |
| Pro ($200/mo) | ✅ | ✅ | ✅ |
| Business | ✅ | ✅ | ✅ |
| Enterprise | ✅ | ✅ | ✅ |
| Edu | ❌ | ❌ | ✅ |
| Go | ❌ | ❌ | ✅ |

In Codex, GPT-5.5 is also available in Fast mode, generating tokens 1.5x faster for 2.5x the cost. The context window in Codex is 400K tokens (vs 1M in the API).

Connect OpenAI GPT-5.5 to Your OpenClaw AI Agent on xCloud: No Extra API Cost

This is where things get really interesting for xCloud users. If you already have a ChatGPT Plus ($20/month) or ChatGPT Pro ($200/month) subscription, you can use OpenAI GPT-5.5 with your OpenClaw agent on xCloud through the Codex OAuth route. No separate API key needed. No per-token billing. OpenAI explicitly supports this for external tools like OpenClaw.

Your subscription’s included quota covers the usage. For most users running a personal OpenClaw AI agent, this is the most affordable way to access the strongest model available.

Here is the cost breakdown:

- ChatGPT Plus: $20/month

- xCloud OpenClaw Hosting: Starting at $24/month

- Total: $44/month for OpenAI GPT-5.5 on your own OpenClaw AI agent

- Additional API costs: $0

Compare that to API pricing at even moderate usage (10M output tokens/month): $300/month just for the API, plus hosting on top. The subscription route saves hundreds of dollars per month.
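Here is that comparison as a small Python sketch. The defaults come from the figures above ($20 Plus, $24 hosting, $5/$30 API pricing); like the text, it ignores input tokens unless you pass them, and it assumes your usage fits inside the subscription’s weekly caps, which is not guaranteed for heavy automation.

```python
def api_route_monthly(output_m: float, input_m: float = 0.0, input_price: float = 5.00,
                      output_price: float = 30.00, hosting: float = 24.00) -> float:
    """Monthly USD on the API route; token volumes are in millions."""
    return input_m * input_price + output_m * output_price + hosting


def subscription_route_monthly(plan: float = 20.00, hosting: float = 24.00) -> float:
    """Monthly USD on the ChatGPT Plus OAuth route (assumes usage fits the plan's caps)."""
    return plan + hosting


print(api_route_monthly(10))         # 324.0
print(subscription_route_monthly())  # 44.0
# Output volume (millions of tokens) at which the API bill alone equals the Plus fee.
print(round(20.00 / 30.00, 3))       # 0.667
```

In other words, once a month’s output passes roughly 0.67M tokens, the API route costs more than the Plus subscription it replaces.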

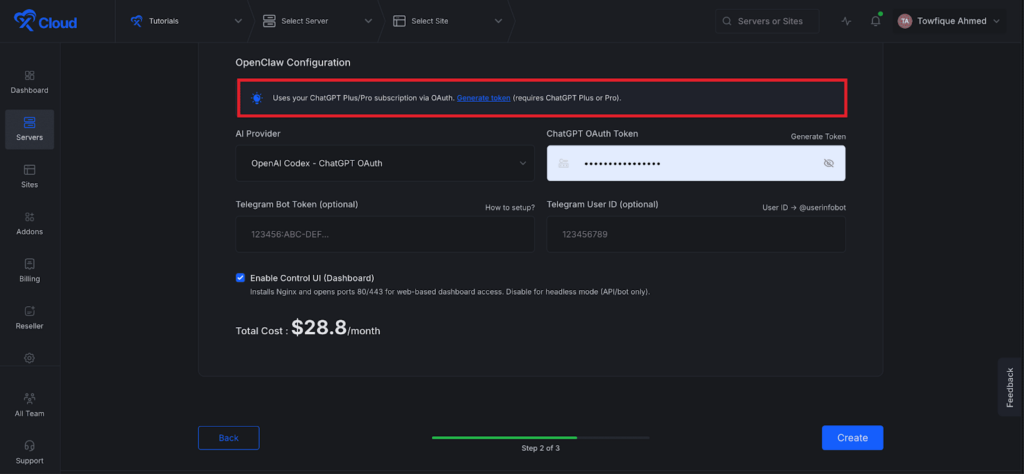

How to Connect OpenAI Codex to OpenClaw With xCloud

OpenAI Codex lets you use your existing ChatGPT Plus or Pro subscription as the AI provider for your OpenClaw agent – no separate API key or billing required. xCloud includes a built-in Codex OAuth token generator that handles the authentication flow securely inside the dashboard.

Follow the steps below to connect OpenAI Codex to xCloud easily.

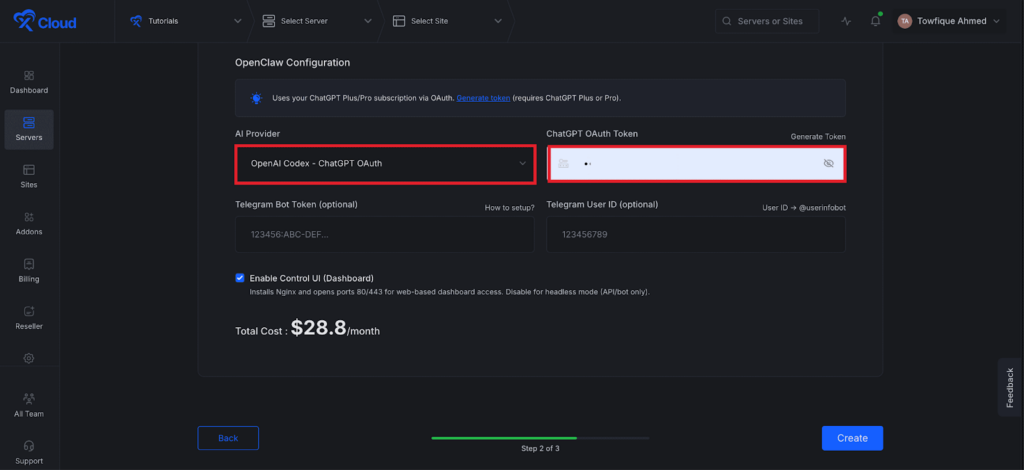

Step 1: Open the OpenClaw Provider Settings

Log in to your xCloud dashboard and navigate to OpenClaw → Providers. In the OpenClaw Configuration panel, look for the AI provider selector.

If you are creating a new OpenClaw instance, choose ‘OpenAI Codex – CHATGPT OAuth’ from the ‘AI Provider’ dropdown menu.

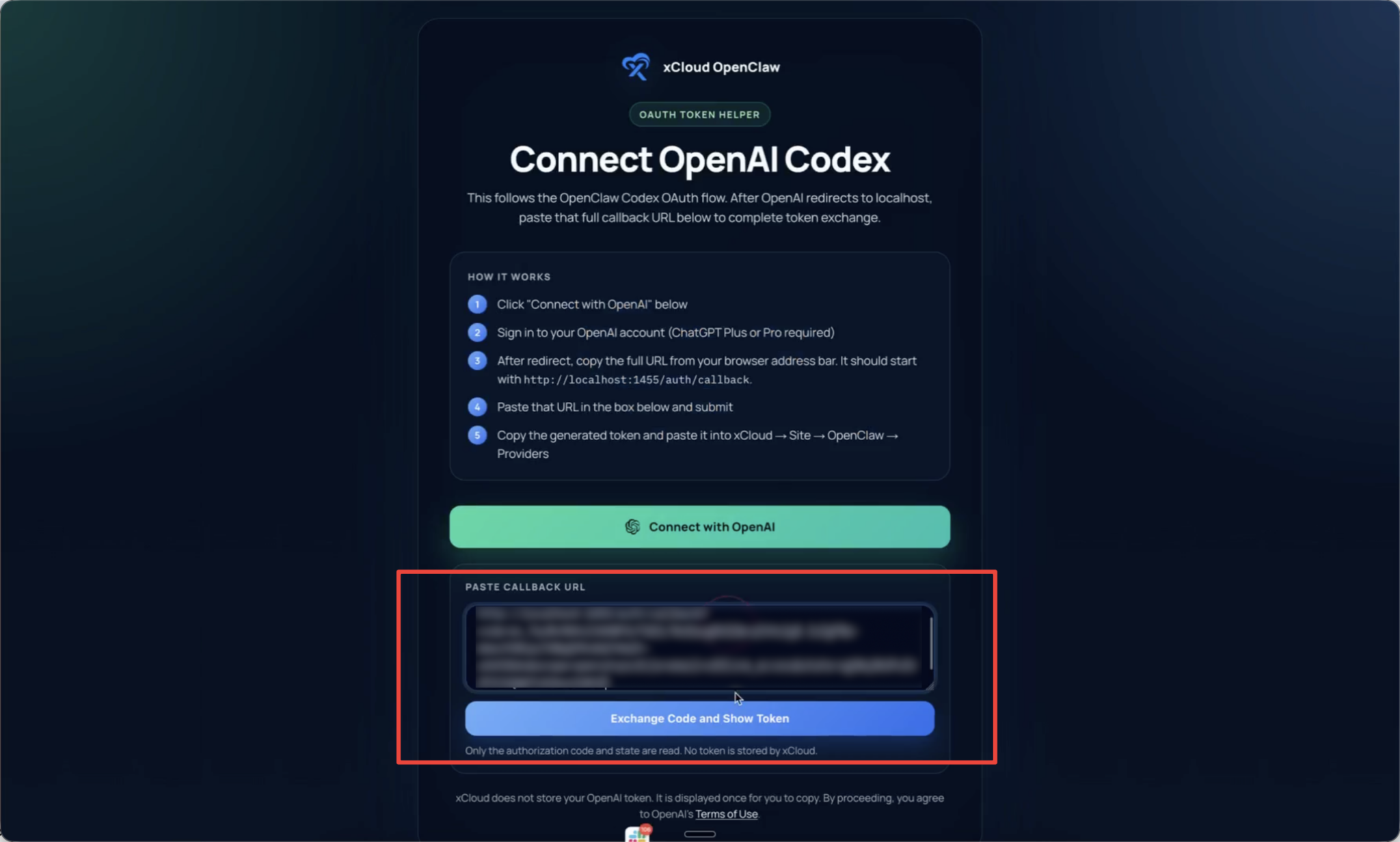

Step 2: Set up Your OAuth Token

Next, set up OpenAI Codex in just a few clicks. Click the ‘Generate Token’ link to proceed.

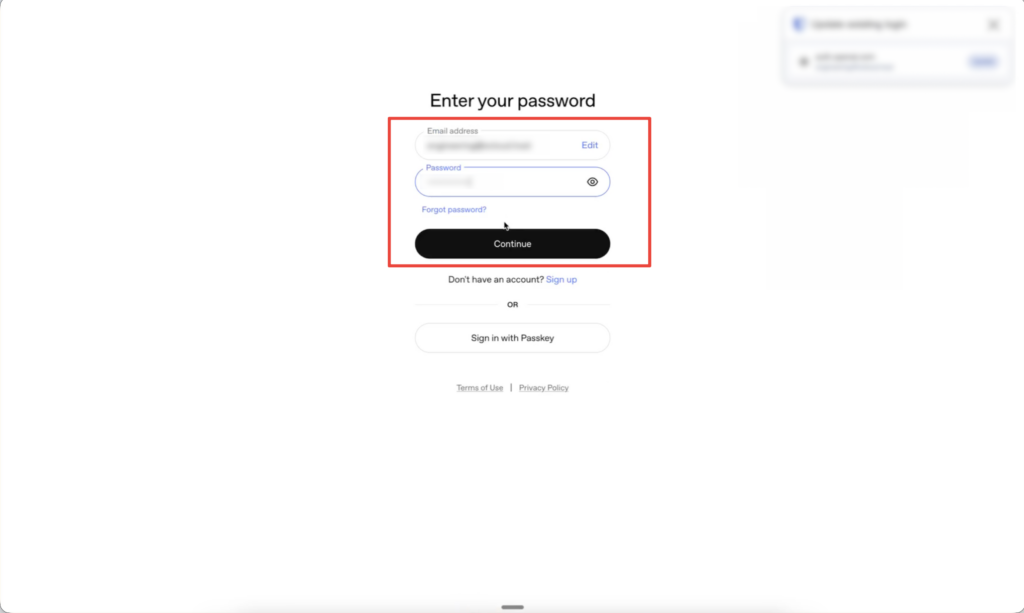

Step 3: Set up OpenAI Account

You will be redirected to the OpenAI Codex integration page.

Read the ‘How It Works’ information carefully, then click the ‘Connect with OpenAI’ button.

Next, you will be redirected to the OpenAI login page. Here, sign in to your OpenAI account (ChatGPT Plus or Pro required) using your credentials.

After the login completes, your browser will show an error page. This is expected: copy the full URL from the browser’s address bar on that page.

Next, go back to the OpenAI Codex setup page, paste that URL into the input box, and click ‘Exchange Code and Show Token’.
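The URL you paste here is an OAuth 2.0 redirect URL. In the standard authorization-code flow, the authorization server appends a one-time `code` (and usually a `state` value) to the redirect URL’s query string, and an “exchange” step trades that code for a token. Assuming xCloud’s generator follows that standard flow, the Python sketch below shows how such a URL is parsed; the localhost address, path, and parameter values are made up for illustration.

```python
from typing import Optional
from urllib.parse import parse_qs, urlparse


def extract_auth_code(callback_url: str, expected_state: Optional[str] = None) -> str:
    """Return the OAuth authorization code from a pasted redirect URL."""
    params = parse_qs(urlparse(callback_url).query)
    if "error" in params:
        raise ValueError(f"authorization failed: {params['error'][0]}")
    if "code" not in params:
        raise ValueError("no 'code' parameter in the callback URL")
    # `state` must echo the value sent with the original request (CSRF protection).
    if expected_state is not None and params.get("state", [None])[0] != expected_state:
        raise ValueError("state mismatch; start the login again")
    return params["code"][0]


url = "http://localhost:1455/auth/callback?code=abc123&state=xyz789"  # hypothetical
print(extract_auth_code(url, expected_state="xyz789"))  # abc123
```

Under the OAuth 2.0 specification, authorization codes are short-lived and single-use, so paste the URL promptly, only once, and never share it.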

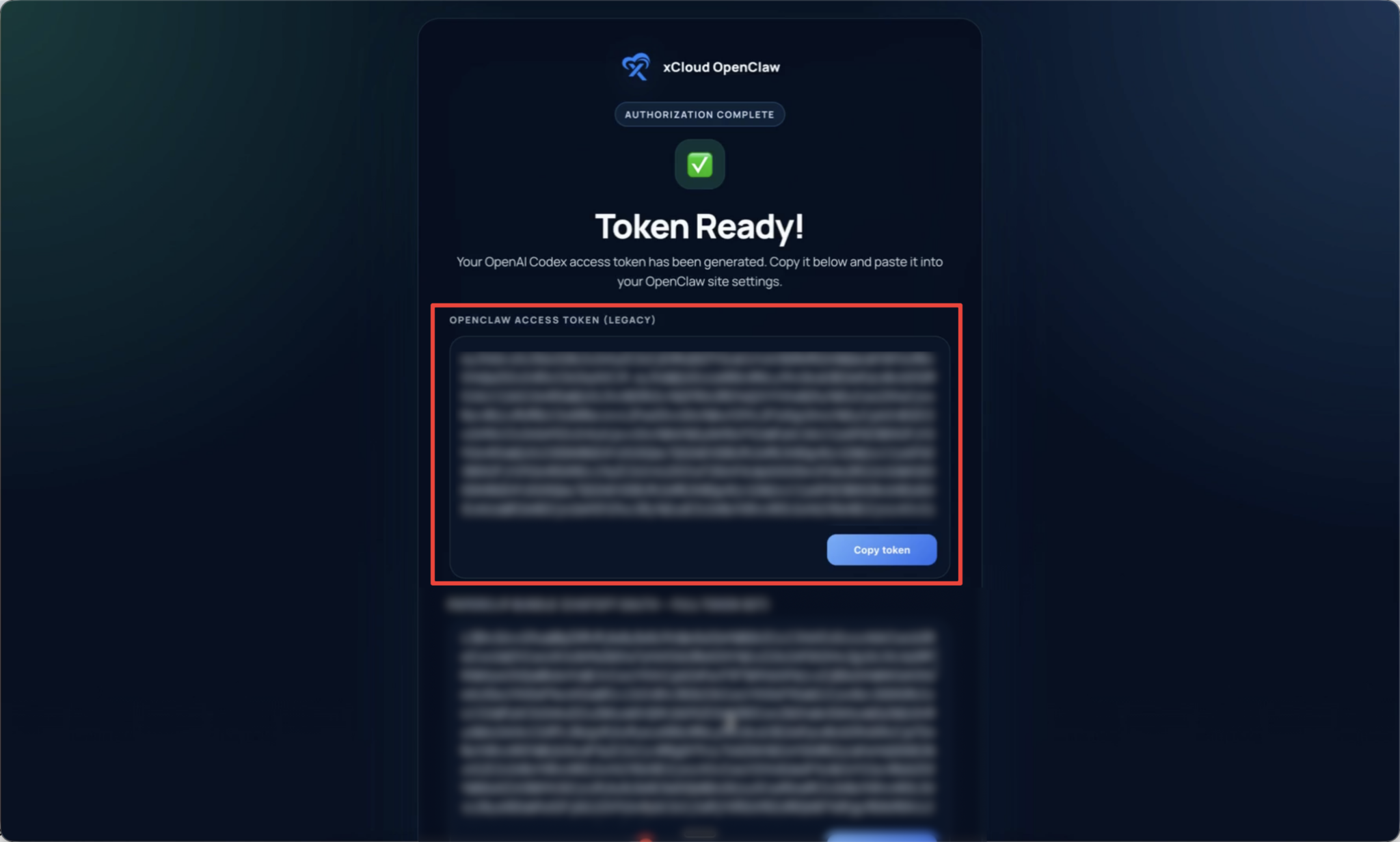

The page will then display a token. Click the ‘Copy Token’ button to proceed.

Step 4: Paste Your Token

Return to the xCloud tab with the OpenClaw configuration. Paste the token you copied into the input field provided and click Submit.

That is it! Your OpenClaw agent will now use OpenAI Codex (backed by your ChatGPT Plus/Pro subscription) as its AI provider. Send a message to your bot on Telegram or WhatsApp — the response should come from GPT-5.5.

👉 For detailed instructions, follow the step-by-step deployment guide.

Why OpenAI GPT-5.5 Matters for Your OpenClaw AI Agent

If you are running an OpenClaw AI agent on xCloud, the underlying model determines how well your agent performs every single task. Here is why OpenAI GPT-5.5 makes a real difference:

- Token Efficiency: Your OpenClaw agent completes more steps before hitting context limits, which means fewer failures and lower costs per task.

- Long-Context Retrieval: At 74.0% on MRCR v2 in the 512K-1M token range (vs 32.2% for Claude Opus 4.7), OpenAI GPT-5.5 is far less likely to lose track of information deep in context, so your OpenClaw agent is much better at recalling what you discussed days ago.

- Agentic Reasoning: GPT-5.5 handles ambiguous, multi-part tasks with less guidance. Give it a messy request, and it plans the steps, uses tools, checks its work, and keeps going.

- Faster Responses: First-token latency is down 20-30% compared to GPT-5.4, and total generation is faster. For OpenClaw agents chaining dozens of tool calls, this compounds into meaningfully faster task completion.

These capabilities are what make the difference between a chatbot that answers questions and an OpenClaw AI agent that actually automates your work.

OpenAI GPT-5.5 vs Claude Opus 4.7: Which One Should You Run on OpenClaw?

Both models are available through OpenClaw on xCloud. The right choice depends on what your AI agent does.

Choose OpenAI GPT-5.5 when:

- Your workflows involve long context (large documents, extended agent sessions, multi-day histories)

- You run high-volume agentic tasks where token efficiency matters

- Terminal and CLI operations are part of your workflow

- Math or scientific reasoning is needed

- You already have a ChatGPT subscription and want zero additional API costs

Choose Claude Opus 4.7 when:

- Your tasks focus on code comprehension across large, complex repositories

- You need the model to follow nuanced, multi-step natural language instructions closely

- Conversational quality and warmth matter more than execution speed

Run both with OpenClaw multi-model routing: OpenClaw supports sub-agent configurations where different models handle different tasks. Assign OpenAI GPT-5.5 to your research and DevOps sub-agents. Assign Claude to your coding comprehension sub-agent. Let the main orchestrator delegate based on task type. Users on xCloud are already running setups like this.
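The delegation idea can be sketched in a few lines of Python. This is not OpenClaw’s configuration format or API; the task categories, keywords, and model identifier strings are all hypothetical, and a real orchestrator would more likely ask a model to classify the request than match keywords.

```python
# Hypothetical routing table: task category -> model identifier.
ROUTES = {
    "devops": "gpt-5.5",
    "research": "gpt-5.5",
    "code-comprehension": "claude-opus-4.7",
}
DEFAULT_MODEL = "gpt-5.5"

# Naive keyword classifier; categories are checked in insertion order, first match wins.
KEYWORDS = {
    "devops": ("deploy", "docker", "server", "terminal"),
    "code-comprehension": ("refactor", "codebase", "repository", "pull request"),
    "research": ("summarize", "compare", "sources"),
}


def classify(prompt: str) -> str:
    text = prompt.lower()
    for category, words in KEYWORDS.items():
        if any(word in text for word in words):
            return category
    return "general"


def route(prompt: str) -> str:
    """Pick the model a sub-agent should use for this prompt."""
    return ROUTES.get(classify(prompt), DEFAULT_MODEL)


print(route("Restart the docker container on staging"))      # gpt-5.5
print(route("Refactor the billing module in our codebase"))  # claude-opus-4.7
```

Keeping the mapping in a plain table makes it easy to move a category to a different model later without touching the classification logic.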

For a broader comparison of agent platforms, see the OpenClaw vs Paperclip vs Hermes guide.

Best AI Agent Hosting Providers for Running OpenAI GPT-5.5 With OpenClaw in 2026

Choosing the right AI agent hosting provider is just as important as choosing the right model. Your hosting infrastructure determines uptime, security, latency, and how quickly you can get your OpenClaw AI agent live. Here is how the best OpenClaw hosting providers compare in 2026:

| Provider | Setup Time | Hosting Type | OpenClaw Pre-Installed | Starting Price |
|---|---|---|---|---|
| xCloud | ~5 minutes | Fully Managed | ✅ | $24/month |
| DigitalOcean | ~15-30 minutes | 1-Click Deploy | ✅ | $24/month + droplet |
| Hostinger | ~10 minutes | 1-Click VPS | ✅ | $6.99/month |
| Self-Hosted (VPS) | 1-3 hours | Manual Setup | ❌ | $5-12/month |

xCloud is the only truly managed AI agent hosting service for OpenClaw. You sign up, xCloud deploys a dedicated server with OpenClaw pre-installed, configures SSL, sets up Telegram and WhatsApp integrations, and handles all ongoing maintenance including updates, backups, and security hardening. Your OpenClaw instance runs in an isolated, dedicated environment with enterprise-grade encryption. The built-in Codex OAuth token generator makes connecting OpenAI GPT-5.5 to your agent a four-step process with zero API costs.

- DigitalOcean offers a 1-Click Deploy marketplace app with security hardening included. It sits between fully managed and self-hosted — faster than a raw VPS setup, but you still handle server maintenance, updates, and troubleshooting yourself.

- Hostinger provides one-click OpenClaw VPS hosting at one of the lowest price points available. It includes pre-integrated AI credits, so you do not need to set up separate API keys. Full root access is available for developers who want control.

- Self-hosted on a VPS (Vultr, Hetzner, AWS, or any Ubuntu server) gives you maximum control but requires comfort with Docker, terminal commands, SSL configuration, and ongoing maintenance. As the managed vs self-hosting comparison explains, the real cost of self-hosting is not the VPS bill — it is the time spent on DevOps instead of using your AI agent.

For teams that want to combine OpenClaw with workflow automation, xCloud also supports OpenClaw + n8n integration on a single server, letting you offload repetitive tasks to n8n and reserve OpenAI GPT-5.5 for decisions that need intelligence.

Bottom line: If you want your OpenClaw AI agent running OpenAI GPT-5.5 in five minutes with zero infrastructure management, xCloud OpenClaw Hosting is the fastest path.

OpenAI GPT-5.5 Next-Generation Inference Efficiency

One detail that often gets overlooked: OpenAI GPT-5.5 matches GPT-5.4’s per-token latency despite being a more capable model. That is unusual. Larger, more capable models are typically slower to serve, but OpenAI co-designed GPT-5.5 with NVIDIA GB200 and GB300 NVL72 systems to avoid that trade-off.

Here is a fascinating example of the model improving its own infrastructure: Codex (running GPT-5.5) analyzed weeks of production traffic patterns and wrote custom heuristic algorithms to partition and balance work across computing cores more evenly. The result was a 20%+ increase in token generation speeds. The model helped improve the infrastructure that serves it.
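OpenAI has not published the heuristics Codex wrote, so the sketch below is not them. It shows a classic textbook heuristic for the same kind of problem: greedy longest-processing-time-first (LPT) scheduling, which assigns each job, largest first, to the currently least-loaded core and is known to stay within 4/3 of the optimal maximum load. The job costs are hypothetical.

```python
import heapq


def lpt_partition(job_costs: list[float], n_cores: int) -> list[list[int]]:
    """Greedy LPT: place jobs (largest first) on the least-loaded core; returns job indices per core."""
    heap = [(0.0, core) for core in range(n_cores)]  # (current load, core id)
    heapq.heapify(heap)
    assignment: list[list[int]] = [[] for _ in range(n_cores)]
    # sorted() is stable, so equal-cost jobs keep their original order.
    for job in sorted(range(len(job_costs)), key=lambda j: job_costs[j], reverse=True):
        load, core = heapq.heappop(heap)
        assignment[core].append(job)
        heapq.heappush(heap, (load + job_costs[job], core))
    return assignment


costs = [7, 5, 4, 3, 3, 2]
plan = lpt_partition(costs, n_cores=2)
print(plan, [sum(costs[j] for j in core) for core in plan])  # [[0, 3, 5], [1, 2, 4]] [12, 12]
```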

On Artificial Analysis’s Coding Index, OpenAI GPT-5.5 delivers state-of-the-art intelligence at half the cost of competitive frontier coding models. For OpenClaw agent workloads where every millisecond of latency compounds across dozens of tool calls, this efficiency translates directly into faster task completion.
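Because an agent’s tool calls usually run one after another, per-call savings add up across the whole chain. The Python sketch below uses entirely hypothetical numbers (30 calls, 100 tokens/s, 0.5 s per tool call, 300 output tokens per call) and applies a 25% first-token improvement, within the 20-30% range cited earlier, plus the 40% output-token reduction. It keeps tokens per second fixed, ignores network overhead, and assumes no parallel tool calls.

```python
def chain_latency(n_calls: int, ttft_s: float, output_tokens: int,
                  tokens_per_s: float, tool_s: float = 0.0) -> float:
    """Wall-clock seconds for n sequential model calls, each followed by a tool call."""
    per_call = ttft_s + output_tokens / tokens_per_s + tool_s
    return n_calls * per_call


baseline = chain_latency(30, ttft_s=1.00, output_tokens=300, tokens_per_s=100, tool_s=0.5)
faster_ttft = chain_latency(30, ttft_s=0.75, output_tokens=300, tokens_per_s=100, tool_s=0.5)
also_fewer_tokens = chain_latency(30, ttft_s=0.75, output_tokens=180, tokens_per_s=100, tool_s=0.5)
print(f"{baseline:.1f}s -> {faster_ttft:.1f}s -> {also_fewer_tokens:.1f}s")  # 135.0s -> 127.5s -> 91.5s
```

In this toy setup, emitting fewer tokens saves far more wall-clock time than the faster first token does.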

OpenAI GPT-5.5 Safety & Safeguards

OpenAI released GPT-5.5 with its most extensive safety evaluation to date. The model went through the full Preparedness Framework, targeted red-teaming for cybersecurity and biology capabilities, and feedback from nearly 200 early-access partners.

Key safety details:

- Preparedness classification: Biological/chemical and cybersecurity capabilities rated as “High” (below the “Critical” threshold that would restrict deployment)

- CoT controllability: Lower than GPT-5.4, meaning the model is less able to reshape its reasoning to evade safety monitors (OpenAI treats this as a safety feature)

- Stricter classifiers: OpenAI deployed tighter controls around higher-risk activity and sensitive cyber requests, and added protections for repeated misuse

OpenAI GPT-5.5 Cybersecurity: Trusted Access for Cyber

Frontier models are becoming increasingly capable of finding and patching security vulnerabilities. OpenAI is taking a proactive approach:

- Trusted Access for Cyber is available through Codex, offering verified users expanded access to GPT-5.5’s advanced cybersecurity capabilities with fewer restrictions

- Organizations defending critical infrastructure can apply to access cyber-permissive models like GPT-5.4-Cyber, subject to strict security requirements

- Government partnerships are being explored to help protect critical infrastructure for the public

- Users can apply for trusted access through ChatGPT to reduce unnecessary refusals for verified defensive work

API access launched one day after the consumer release because OpenAI needed to apply different safeguards for API deployments.

OpenAI GPT-5.5 Known Limitations

No model is perfect, and it is important to set the right expectations:

- Mid-range context (16K-64K tokens): According to o-mega’s analysis, OpenAI GPT-5.5 performs slightly below GPT-5.4 in this range. The MRCR v2 table above shows a similar dip, with GPT-5.4 ahead at 16K-32K and 64K-128K. If your workload consistently operates here (many RAG systems do), performance may be marginally lower.

- Creative writing: GPT-5.5 was optimized for execution, not expression. It can feel more mechanical for creative tasks than both Claude and GPT-5.4.

- Weekly caps on subscription route: The ChatGPT Plus OAuth path has usage limits. Power users running dozens of automated tasks daily may need ChatGPT Pro ($200/month) or a direct OpenAI API key for higher limits.

Deploy Your OpenClaw AI Agent With GPT-5.5 on xCloud Today

Whether you are just starting or already using OpenClaw, getting GPT-5.5 live is quick.

New to OpenClaw? Start now with xCloud OpenClaw Hosting. Plans start at $24 per month. You get a ready OpenClaw agent with GPT-5.5 on Telegram in minutes. No setup, no hassle.

Already using OpenClaw on xCloud? Just complete the quick OAuth setup. Switch to GPT-5.5 instantly without changing anything else.

👉 Deploy GPT-5.5 on OpenClaw — Starting at $24/month

Frequently Asked Questions About OpenAI GPT-5.5

Is OpenAI GPT-5.5 the strongest AI model available in 2026?

GPT-5.5 leads on more major benchmarks than any other model released in 2026, including Terminal-Bench 2.0 (82.7%), FrontierMath Tier 4 (39.6% via Pro), MRCR v2 long-context retrieval (74.0%), GDPval knowledge work (84.9%), and OSWorld computer use (78.7%). It ranks #3 overall on BenchLM’s leaderboard out of 115 models. Claude Opus 4.7 outperforms it on SWE-bench Pro, MCP-Atlas, FinanceAgent, and GPQA Diamond. No single model wins everything, but OpenAI GPT-5.5 holds the top position in more categories than any competitor.

Can I use OpenAI GPT-5.5 with OpenClaw without paying for API credits?

Yes! Connect your existing ChatGPT Plus ($20/month) or Pro ($200/month) subscription to your OpenClaw AI agent through the Codex OAuth route. This uses your subscription’s included quota instead of per-token API billing. xCloud makes this OpenClaw setup straightforward through the control panel.

How much does OpenAI GPT-5.5 cost through the API?

$5 per million input tokens and $30 per million output tokens. Batch and Flex processing runs at half the standard rate. GPT-5.5 Pro costs $30/$180 per million tokens. Full details on OpenAI’s pricing page.

What is the context window for OpenAI GPT-5.5?

1,050,000 tokens through the API and 400,000 through Codex. This is large enough to process entire codebases, multi-document research sets, or extended OpenClaw agent conversation histories in a single session.

How does OpenAI GPT-5.5 compare to GPT-5.4?

GPT-5.5 is a full retrain, not an incremental update. Long-context retrieval more than doubled (74.0% vs 36.6% MRCR v2). Terminal-Bench improved from 75.1% to 82.7%. Token efficiency improved by roughly 40% on equivalent tasks. Per-token API pricing doubled, but the real-world cost increase is around 20% after accounting for token efficiency gains.

Does OpenAI GPT-5.5 work on Telegram and WhatsApp through OpenClaw?

Yes! OpenClaw on xCloud comes with Telegram and WhatsApp integrations pre-configured. Once OpenAI GPT-5.5 is connected through OAuth, all conversations on these platforms use the model.

Should I switch from Claude to OpenAI GPT-5.5 for my OpenClaw AI agent?

It depends on what your OpenClaw agent does. OpenAI GPT-5.5 is stronger at long-context tasks, terminal operations, and math. Claude Opus 4.7 is better at code comprehension and following complex instructions. Many OpenClaw users run both through sub-agents, assigning each model to the tasks it handles best.

Can I use OpenAI GPT-5.5 with n8n automation on xCloud?

Yes! If you run OpenClaw + n8n on xCloud, OpenAI GPT-5.5 powers the reasoning and decision-making layer while n8n handles deterministic workflow execution. This combination can reduce token costs by offloading repetitive tasks to n8n.

What is the difference between OpenAI GPT-5.5 and GPT-5.5 Pro?

Same underlying model, different compute allocation. GPT-5.5 Pro applies additional parallel test-time compute to harder problems. It scores higher on difficult math (39.6% vs 35.4% on FrontierMath Tier 4) and deep research tasks (90.1% vs 84.4% on BrowseComp). Pro is limited to ChatGPT Pro, Business, and Enterprise tiers.

Is it safe to give OpenAI GPT-5.5 access to my tools and systems through OpenClaw?

Your OpenClaw instance runs on a dedicated, isolated server on xCloud with enterprise-grade encryption, automatic SSL certificates, and daily encrypted backups. OpenAI evaluated GPT-5.5 through its Preparedness Framework and deployed stricter classifiers for high-risk requests. For additional security, follow the principle of least privilege and only grant your OpenClaw agent the permissions it needs. See the managed vs self-hosting comparison for details on security architecture.

What are the best AI agent hosting providers for OpenClaw in 2026?

The best OpenClaw hosting providers in 2026 include xCloud (fully managed, $24/month), DigitalOcean (1-Click Deploy), Hostinger (budget VPS), and self-hosted options on Vultr, Hetzner, or AWS. xCloud is the only truly managed option that handles all infrastructure, updates, security, and messaging integrations automatically — making it the fastest way to get OpenAI GPT-5.5 running on your OpenClaw AI agent.

If you have found this blog helpful, feel free to subscribe to our blogs for valuable tutorials, guides, knowledge, and tips on web hosting and server management. You can also join our Facebook community to share insights and take part in discussions.